EIQ2 Introduction

Data platform solutions are widely used within the largest and most complex businesses in the world. In challenging times, good decision-making becomes critical, and the best decisions are made when all the relevant available data is taken into consideration. EIQ2 provides ETL and BI technology based on Microsoft SQL Server, taking a ‘light touch’ approach that enables rapid development and deployment. As a dynamic, automated platform for building high-performance, integrated data solutions, EIQ2 provides the ability to answer critical questions by moving data from operational systems towards a single version of the truth, in a format that is current, actionable, and easy to understand.

The EIQ2 toolset is a cost-effective, intuitive data management solution that collates and integrates data from across the organisational estate, providing a coherent and accurate foundation for business reporting and insight. It delivers:

- An intuitive, easy-to-use, front-end application;

- Automation of code builds;

- Minimisation or elimination of re-work;

- Reduction in development time and resource costs;

- Faster delivery of business benefit with a compressed cycle time; and

- A cloud-based solution that is free of capital expenditure

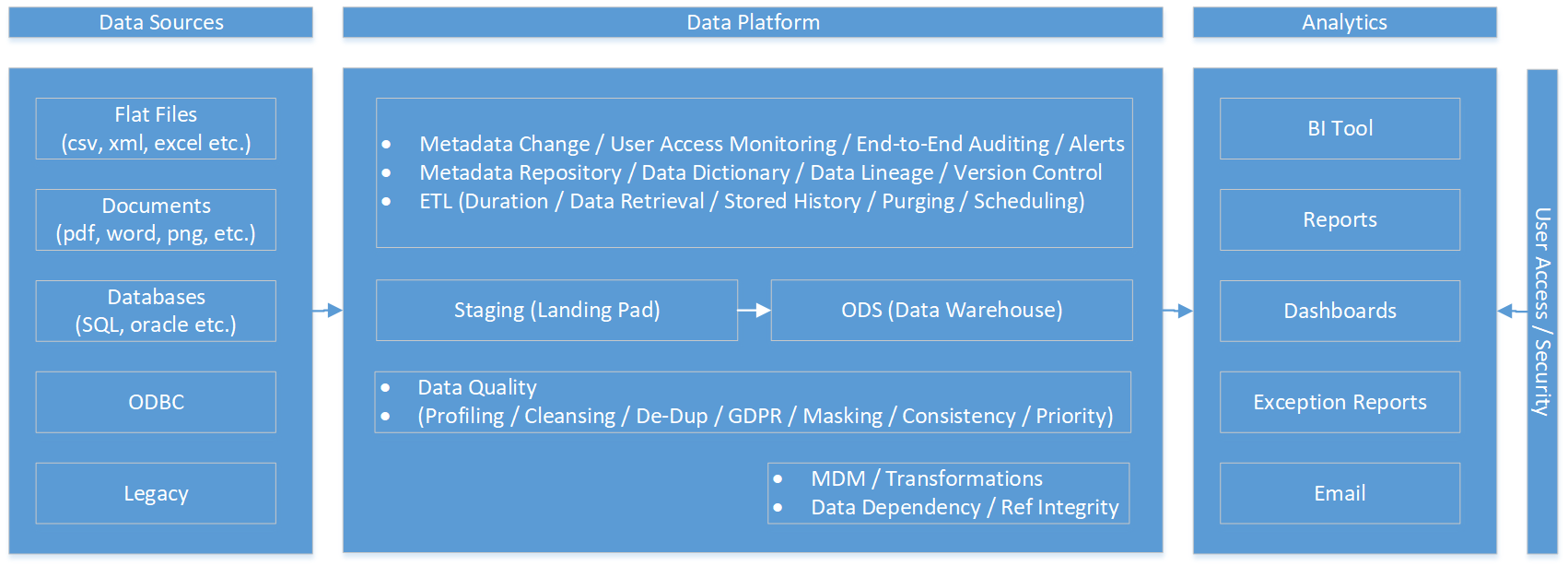

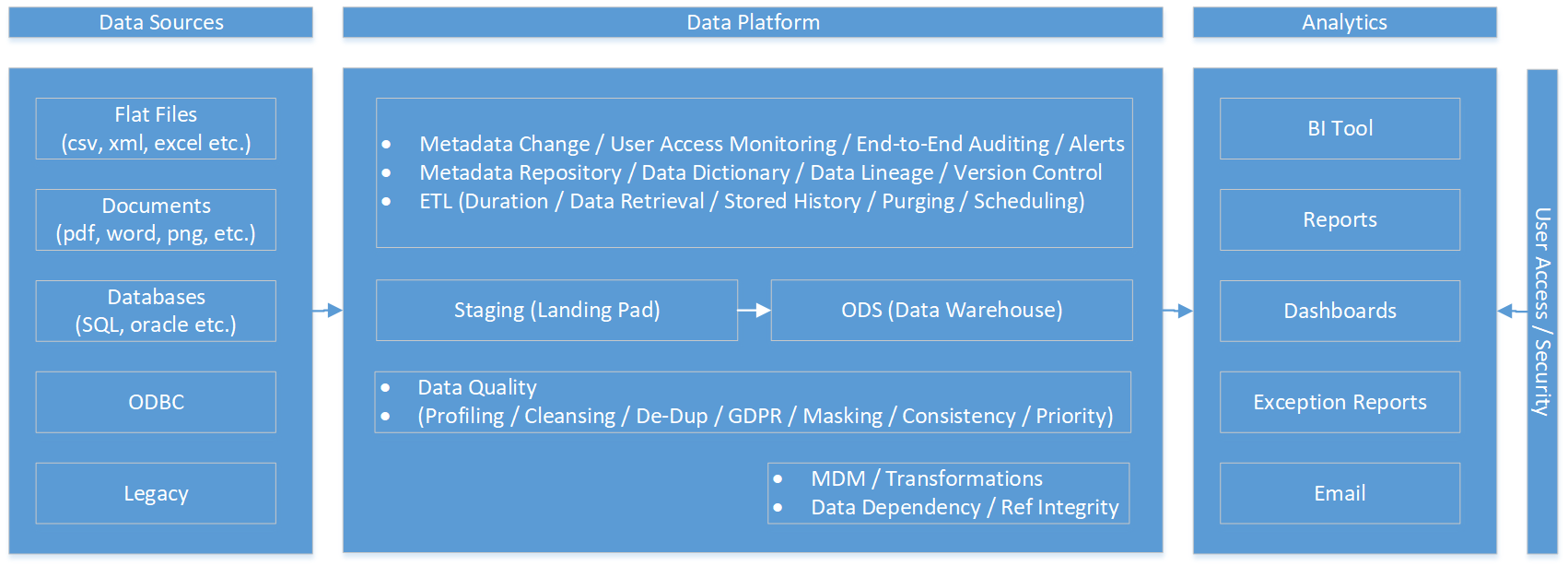

The EIQ2 process that the toolset follows is represented below, followed by an explanation of each key component.

Data Acquisition

- Consumes data from all production and local systems;

- Streamlines and integrates changed data from multiple data sources;

- Provides ‘changes only’ data for consumption;

- Provides a scheduled task manager;

- Imports and analyses document-related data files such as PDFs, Word documents and emails; and

- Provides both sequential and parallel data processing
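The ‘changes only’ idea can be illustrated with a generic snapshot comparison. The following is a minimal Python sketch under stated assumptions, not EIQ2's actual mechanism: it assumes each source row carries a unique business key, and the names `changes_only` and `row_hash` are hypothetical. Rows are hashed over a chosen set of tracked columns, so only one hash per key needs to be kept between loads.

```python
import hashlib
import json
from typing import Dict, List

Row = Dict[str, object]


def row_hash(row: Row, columns: List[str]) -> str:
    """Hash the tracked columns of a row so a change in any of them is detected."""
    # json.dumps keeps values unambiguous (no delimiter collisions); default=str covers dates etc.
    payload = json.dumps([row.get(c) for c in columns], default=str)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def changes_only(previous: List[Row], current: List[Row],
                 key: str, columns: List[str]) -> Dict[str, List[Row]]:
    """Compare two snapshots of a source table and return only the differences."""
    previous_hashes = {r[key]: row_hash(r, columns) for r in previous}
    current_keys = set()
    inserts: List[Row] = []
    updates: List[Row] = []
    for row in current:
        k = row[key]
        current_keys.add(k)
        if k not in previous_hashes:
            inserts.append(row)  # new business key
        elif previous_hashes[k] != row_hash(row, columns):
            updates.append(row)  # existing key whose tracked values changed
    # Keys present in the previous snapshot but missing now are treated as deletions.
    deletes = [r for r in previous if r[key] not in current_keys]
    return {"insert": inserts, "update": updates, "delete": deletes}
```

Where a source system offers native change tracking (for example SQL Server Change Data Capture), reading its change log would normally be preferred over comparing full snapshots.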

Data Integration

- Provides the kernel for Data Governance and MDM;

- Provides time variant data for later usage;

- Provides consistent, defined and known data;

- Delivers data integrity and data security;

- Holds the lowest level of data (atomic data), so that later structures have effective and accurate calculations;

- Provides automatic alerts on abnormalities or poor-quality data, linked to the relevant Data Owner;

- Provides data profiling;

- Provides the capability to flag and report data fixes and cleansing on all disjointed data;

- Provides the capability to recognise duplicate data and to report that to the appropriate Data Steward;

- Eliminates data redundancy;

- Purges historical or invalid data;

- Reduces ‘technical debt’ by making that data available to users;

- Increases data consistency and confidence; and

- Decreases the risk of regulatory fines
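Data profiling and duplicate recognition can be sketched in a few lines. This is an illustrative Python example only, assuming rows arrive as dictionaries; `profile_column`, `normalise` and `find_duplicates` are hypothetical names and do not represent EIQ2's own rules. Duplicates here are exact matches after trimming and lower-casing, so each group returned is a candidate for a Data Steward to review rather than a confirmed duplicate.

```python
from typing import Dict, List, Optional, Tuple

Row = Dict[str, object]


def profile_column(rows: List[Row], column: str) -> Dict[str, int]:
    """Basic profile of one column: row count, missing values and distinct values."""
    values = [row.get(column) for row in rows]
    present = [v for v in values if v is not None and v != ""]
    return {
        "count": len(values),
        "missing": len(values) - len(present),
        "distinct": len(set(present)),
    }


def normalise(value: Optional[object]) -> str:
    """Trim and lower-case a value so trivially different spellings compare equal."""
    return "" if value is None else str(value).strip().lower()


def find_duplicates(rows: List[Row], key_columns: List[str]) -> Dict[Tuple[str, ...], List[int]]:
    """Group row positions by normalised key; groups with more than one row are likely duplicates."""
    groups: Dict[Tuple[str, ...], List[int]] = {}
    for position, row in enumerate(rows):
        key = tuple(normalise(row.get(c)) for c in key_columns)
        if all(part == "" for part in key):
            continue  # rows with no key values are a data-quality issue, not a duplicate
        groups.setdefault(key, []).append(position)
    return {key: positions for key, positions in groups.items() if len(positions) > 1}
```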

Business Logic Layer

- Provides restructured data that makes business sense;

- Stores and defines business transformation rules;

- Provides a source of fact-based information to facilitate informed, strategic decision-making and trend analysis;

- Provides the ability to produce in-depth, accurate reporting based on business rules, to show how the organisation is progressing and performing;

- Discovers relationships and derives dependencies amidst disparate information;

- Provides fuzzy data matching and pattern linkage;

- Delivers a holistic, lexicon-based approach for text data mining;

- Manages historical data intelligence;

- Provides data masking for testing and UAT (user acceptance testing);

- Centralises and manages the complete data estate;

- Standardises data across the organisation and supports MDM;

- Ensures data integrity through surrogate key management; and

- Delivers a single, consistent and defined common data model
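Surrogate key management can be pictured as a lookup from natural (business) keys to stable integer keys. The sketch below is a hypothetical, in-memory Python illustration, not EIQ2's implementation: the class `SurrogateKeyManager`, its methods and the reserved unknown key of `-1` are assumptions. It keeps keys separate per source system; matching the same entity across systems would be an MDM step on top of this.

```python
from typing import Dict, Optional, Tuple


class SurrogateKeyManager:
    """Hypothetical in-memory key map: (source system, natural key) -> surrogate key."""

    def __init__(self, unknown_key: int = -1) -> None:
        self._keys: Dict[Tuple[str, str], int] = {}
        self._next_key = 1
        # Reserved key for facts whose natural key is missing or not yet loaded.
        self.unknown_key = unknown_key

    def get_or_create(self, source: str, natural_key: str) -> int:
        """Return the existing surrogate key, or allocate the next one for a new natural key."""
        lookup = (source, natural_key)
        if lookup not in self._keys:
            self._keys[lookup] = self._next_key
            self._next_key += 1
        return self._keys[lookup]

    def resolve(self, source: str, natural_key: Optional[str]) -> int:
        """Look up a key when loading facts; never allocates, falls back to the unknown key."""
        if natural_key is None:
            return self.unknown_key
        return self._keys.get((source, natural_key), self.unknown_key)
```

In a real warehouse the map would normally be persisted as a key table so that keys stay stable across loads; the in-memory dictionary here only shows the lookup logic.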

Delivery Layer

- Delivers Business Intelligence;

- Delivers a store of data that has been designed and built so that it can be readily interrogated by tools such as Power BI;

- Allows self-service profiling, interrogation and analysis of data;

- Generates output data sets to a secondary target;

- Generates automated exception reports;

- Simplifies data discovery and data heritage;

- Helps to unlock secrets, trends and data patterns;

- Provides the fast production of fact-based information;

- Delivers the ability to produce in-depth, accurate reporting using market toolsets;

- Automatically creates a Data Dictionary; and

- Provides migration procedures
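Automatic creation of a Data Dictionary amounts to reading the database's own metadata. As a self-contained sketch, the Python example below uses the standard-library `sqlite3` module rather than SQL Server (a SQL Server version would more likely query `INFORMATION_SCHEMA.COLUMNS`); the function name `build_data_dictionary` and the output fields are hypothetical and do not describe EIQ2's actual dictionary format.

```python
import sqlite3
from typing import Dict, List


def build_data_dictionary(conn: sqlite3.Connection) -> List[Dict[str, object]]:
    """List every column of every user table with its declared type, nullability and key flag."""
    tables = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
    entries: List[Dict[str, object]] = []
    for table in tables:
        quoted = '"' + table.replace('"', '""') + '"'
        # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) for each column.
        for _cid, column, col_type, notnull, _default, pk in conn.execute(
                f"PRAGMA table_info({quoted})"):
            entries.append({
                "table": table,
                "column": column,
                "type": col_type,
                "nullable": not notnull,
                "primary_key": bool(pk),
            })
    return entries
```

Business descriptions would still need to be added from a glossary; the generated entries only capture the technical structure.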

Generic Services

- Defines and manages a glossary of business and computing definitions;

- Centralises a metadata repository;

- Manages and validates historical metadata changes;

- Manages metadata deployments;

- Tracks data lineage and data dependencies;

- Self-documents;

- Tracks and traces runtime processes;

- Captures errors and warnings of inconsistencies through an integrated, email-enabled error mart; and

- Delivers an array of auditing administrative reports
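Tracking data lineage and dependencies can be modelled as a directed graph in which each object points to the objects it is built from. The Python sketch below is illustrative only: the lineage dictionary and the function names `upstream`, `downstream` and `load_order` are hypothetical, and it relies on the standard-library `graphlib` module (Python 3.9 or later) for ordering.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical lineage metadata: each object maps to the objects it is built from.
Lineage = Dict[str, Set[str]]


def upstream(lineage: Lineage, obj: str) -> Set[str]:
    """Everything obj is derived from, directly or indirectly (its data lineage)."""
    seen: Set[str] = set()
    stack = list(lineage.get(obj, set()))
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(lineage.get(node, set()))
    return seen


def downstream(lineage: Lineage, obj: str) -> Set[str]:
    """Everything that would be affected if obj changed (impact analysis)."""
    return {other for other in lineage if obj in upstream(lineage, other)}


def load_order(lineage: Lineage) -> List[str]:
    """Order objects so each is processed after everything it depends on.

    Raises graphlib.CycleError if the dependencies are circular.
    """
    return list(TopologicalSorter(lineage).static_order())
```

The same graph answers both questions a metadata repository is usually asked: where a value came from (`upstream`) and what breaks if a source changes (`downstream`).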